Micro vs macro benchmarking

- Micro vs macro benchmarking is a recurring topic on Hacker News. People debate whether they should measure the performance of a small piece of code or the performance of their whole app. Micro benchmarking is often the better choice, but not always.

- Let’s rephrase the question:

- What makes micro benchmarking better than macro benchmarking?

- The answer is simple: code coverage!

Code coverage


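- Code coverage is the percentage of your code that is executed by your tests. It counts the union of what all tests execute, so it is not simply the average of each test’s coverage. If you have 10 tests and each covers 50% of the code, your coverage is 50% only if they all exercise the same half. If they exercise different parts, it can be anywhere up to 100%.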
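To make the arithmetic concrete, here is a minimal Java sketch that represents each test’s coverage as a set of executed line numbers and computes overall coverage as their union. The class `CoverageDemo`, its helpers, and the 100-line program are hypothetical. In practice a coverage tool such as JaCoCo collects this data for you.

```java
import java.util.BitSet;
import java.util.Collections;
import java.util.List;

// Hypothetical example: overall line coverage is the union of the lines
// executed by each test, not the average of per-test percentages.
final class CoverageDemo {

    /** Fraction of totalLines executed by at least one test. */
    static double unionCoverage(List<BitSet> linesHitPerTest, int totalLines) {
        BitSet covered = new BitSet(totalLines);
        for (BitSet hit : linesHitPerTest) {
            covered.or(hit); // a line counts once, however many tests hit it
        }
        return (double) covered.cardinality() / totalLines;
    }

    /** Builds a BitSet with lines [from, to) marked as executed. */
    static BitSet lines(int from, int to) {
        BitSet b = new BitSet();
        b.set(from, to);
        return b;
    }

    public static void main(String[] args) {
        int total = 100;
        // Ten tests that all exercise the same half of the code.
        List<BitSet> sameHalf = Collections.nCopies(10, lines(0, 50));
        // Two tests that each exercise a different half.
        List<BitSet> disjointHalves = List.of(lines(0, 50), lines(50, 100));
        System.out.println(unionCoverage(sameHalf, total));       // 0.5
        System.out.println(unionCoverage(disjointHalves, total)); // 1.0
    }
}
```

Ten tests at 50% each still yield 50% overall when they overlap completely, while two non-overlapping tests reach 100%.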

- The more of your code your tests cover, the lower the risk of introducing a regression. The less they cover, the higher that risk.

- Let’s say you are working on a new feature. You test it with a couple of unit tests. You run your tests, and everything passes. Then you launch the feature and notice that some of your users are hitting a bug you hadn’t seen before.

- You realize that your tests are not enough and that you need more of them to cover the new feature. Confident in your micro-benchmarks, you decide to write more integration tests. This takes a couple of hours. It will be worth it, won’t it?

- Later that day, you run the same micro-benchmarks and notice that your new feature is slower than the old code. You spend another hour writing more micro-benchmarks, and you make your code faster.
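A micro-benchmark of this kind times one small function in isolation and compares the old and new versions. The sketch below is a hand-rolled Java harness under stated assumptions: `oldJoin` and `newJoin` are hypothetical stand-ins for the old and new code, and the warm-up and iteration counts are arbitrary. On the JVM, JMH is the usual tool for serious measurements, because it handles warm-up, forking and dead-code elimination far more carefully than a plain loop.

```java
import java.util.function.IntFunction;

// Naive micro-benchmark harness (a sketch, not a substitute for JMH).
final class MicroBench {

    // Hypothetical "old" implementation: builds the string with a StringBuilder.
    static String oldJoin(int n) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < n; i++) sb.append(i).append(',');
        return sb.toString();
    }

    // Hypothetical "new" implementation: same output, but concatenates
    // immutable Strings in a loop, copying the whole string each iteration.
    static String newJoin(int n) {
        String s = "";
        for (int i = 0; i < n; i++) s += i + ",";
        return s;
    }

    // Results are folded into this field so the JIT cannot discard the work.
    static volatile int sink;

    /** Average nanoseconds per call of f(n), measured after a warm-up phase. */
    static double nanosPerCall(IntFunction<String> f, int n, int warmup, int iterations) {
        for (int i = 0; i < warmup; i++) sink += f.apply(n).length();
        long start = System.nanoTime();
        for (int i = 0; i < iterations; i++) sink += f.apply(n).length();
        return (System.nanoTime() - start) / (double) iterations;
    }

    public static void main(String[] args) {
        int n = 500;
        double oldNs = nanosPerCall(MicroBench::oldJoin, n, 100, 200);
        double newNs = nanosPerCall(MicroBench::newJoin, n, 100, 200);
        System.out.printf("old: %.0f ns/call, new: %.0f ns/call (%.1fx)%n",
                oldNs, newNs, newNs / oldNs);
    }
}
```

Timings vary by machine and JVM, so treat the ratio between the two versions, not the absolute numbers, as the signal.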


- You are happy and proud of having “improved” your code. You went from 50% to 80% code coverage.

- With micro-benchmarks, you would have noticed the regression before launching the new feature, and increased your code coverage at the same time.
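One way to catch such a regression before launch is to compare each micro-benchmark result against a recorded baseline and fail the check when the new result is slower by more than a tolerance. This is a minimal Java sketch; `RegressionGate`, the baseline value, and the 10% tolerance are all hypothetical.

```java
// Hypothetical regression gate: flags a benchmark result that is slower
// than a stored baseline by more than a given tolerance.
final class RegressionGate {

    /** True if measuredNs exceeds baselineNs by more than tolerance (0.10 = 10%). */
    static boolean isRegression(double baselineNs, double measuredNs, double tolerance) {
        return measuredNs > baselineNs * (1.0 + tolerance);
    }

    public static void main(String[] args) {
        double baselineNs = 1_000.0; // hypothetical ns/call from the previous release
        double measuredNs = 1_250.0; // hypothetical ns/call for the new feature
        if (isRegression(baselineNs, measuredNs, 0.10)) {
            // In CI you would exit with a non-zero status here to block the release.
            System.out.println("Performance regression: " + measuredNs
                    + " ns/call vs baseline " + baselineNs + " ns/call");
        } else {
            System.out.println("OK");
        }
    }
}
```

In a CI job you would feed `isRegression` the numbers from your benchmark run. The tolerance absorbs normal run-to-run noise, so it should be tuned to how stable your measurements are.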


Build better, faster software

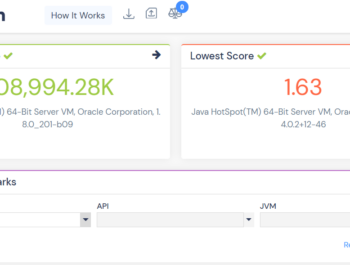

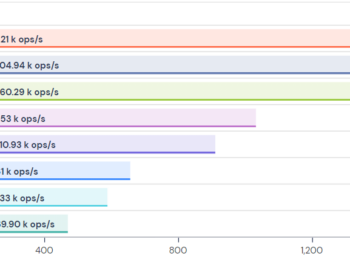

Benchmark your Java stack, code, 3rd party libraries, APIs.

Get Started